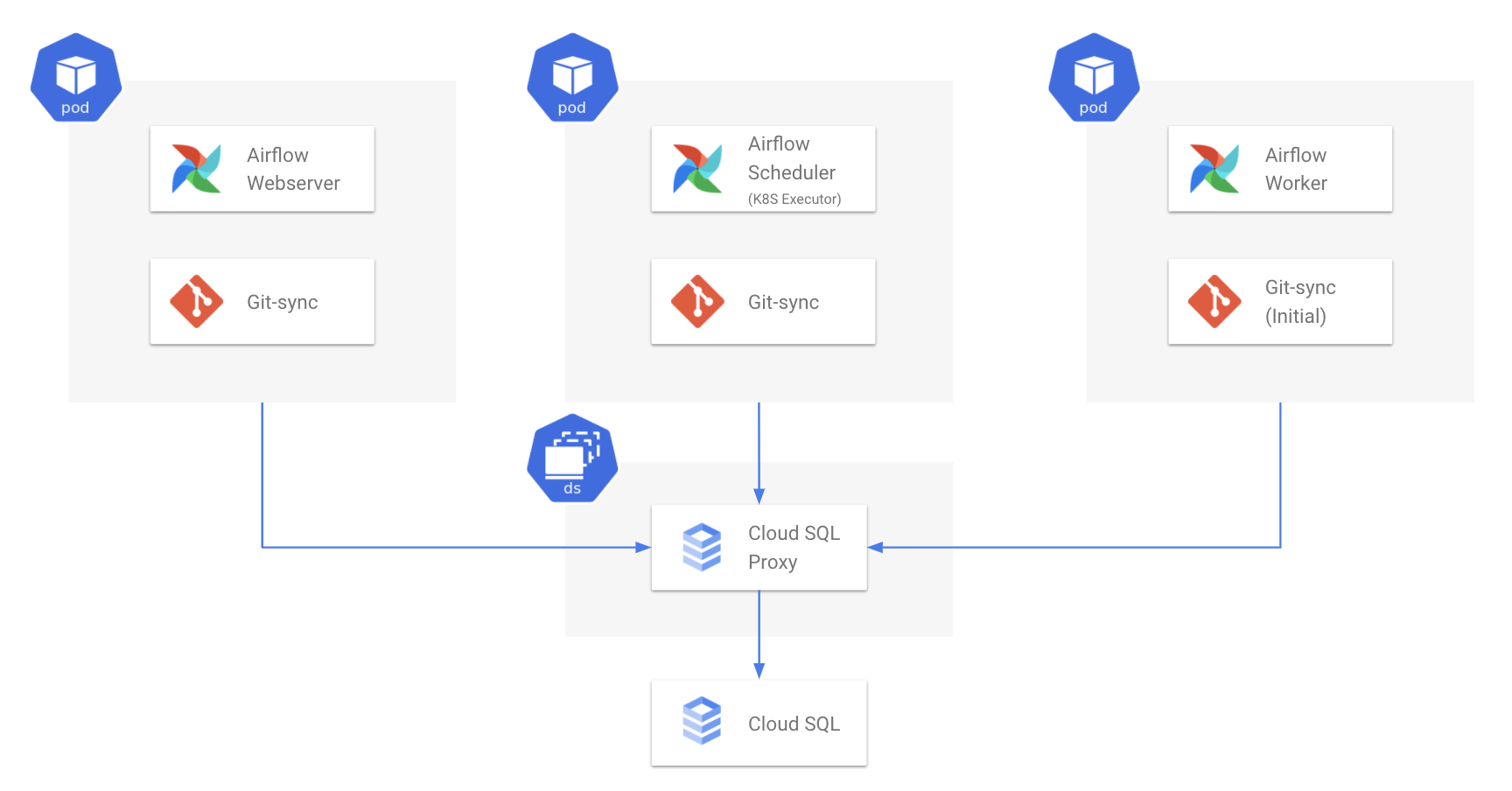

It can be desirable to install Airflow on GKE from scratch for a variety of reasons: some may want more flexibility, or you may be running Airflow on premises and looking for a way to quickly spin up a similar cluster in the cloud to process some extra bulky data. In this post I describe how to install and configure Apache Airflow components on GKE: Celery Executor, Redis and Cloud SQL.

To deploy to Kubernetes I use a Helm chart. As Airflow is quite a complex platform, installation involves a lot of infrastructure work: a cluster has to be created, Cloud SQL provisioned, an SSD disk for Redis created, the necessary Google APIs enabled, etc.

To run Airflow, a few pods should be scheduled, most notably the scheduler, webserver, and worker pods. For this purpose, StatefulSets were used in combination with a headless Service for workers (notice that clusterIP is None):

```yaml
apiVersion: v1
kind: Service
metadata:
  name: worker
spec:
  clusterIP: None
  selector:
    app: airflow
    tier: worker
  ports:
    - protocol: TCP
      port: 8793
```

A headless Service can be used when a cluster IP and load balancing between pods are not necessary (only the list of pod IPs is of interest). In our case the headless Service just gives pods a distinct network identity (worker-0, worker-1, etc.) and simplifies access to local logs. No Persistent Volumes were created here, but we could add them to save or archive logs (alternatively, Airflow could be configured to save logs in Google Cloud Storage, Elasticsearch, etc.) or to cache some static data.

Using git sync to deliver Airflow DAGs is common practice, which to my taste looks like too much flexibility, opening the door to hard-to-debug inconsistencies.
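To make the "distinct networking identity" of the workers more concrete, here is a minimal sketch of the DNS names that StatefulSet pods receive behind a headless Service. It assumes the StatefulSet is also called `worker` (inferred from the pod names worker-0, worker-1), that everything lives in a namespace called `airflow`, and that the cluster uses the default `cluster.local` domain. None of these are stated for the GKE setup, so adjust them to your cluster.

```python
def worker_hostnames(replicas, statefulset="worker", service="worker",
                     namespace="airflow", cluster_domain="cluster.local"):
    """Build the stable per-pod DNS names of a StatefulSet behind a headless Service.

    Kubernetes names these pods <statefulset>-<ordinal> and resolves them as
    <pod>.<service>.<namespace>.svc.<cluster-domain>.
    The default argument values are assumptions for illustration.
    """
    return [
        f"{statefulset}-{ordinal}.{service}.{namespace}.svc.{cluster_domain}"
        for ordinal in range(replicas)
    ]


def worker_log_endpoints(replicas, port=8793, **naming):
    """Return the base URL of each worker's log port (8793 in the Service above)."""
    return [f"http://{host}:{port}" for host in worker_hostnames(replicas, **naming)]


if __name__ == "__main__":
    for url in worker_log_endpoints(2):
        print(url)
```

Because each worker keeps the same name across restarts, the webserver can address a specific worker when it needs that worker's local logs, which a load-balanced cluster IP would not allow.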

Apache Airflow is a well-known workflow management platform. A few years ago Google announced Composer, a fully managed Kubernetes installation of Apache Airflow, which is a great way to start automating your ETL, ML and DevOps chores using Python and Google Cloud Platform.

If you are using the official Airflow Helm chart, enabling git sync is very easy: all you have to do is set the correct values in the values.yaml file. As a first step, you need to enable it, then select the correct git repository and target branch. By default, Airflow will sync all DAGs located in the tests/dags directory. Here, because our structure is a little more complex, we set it to sync everything within the root, up to 5 nested folders.

It can be safely assumed that you don't keep all your source code publicly available, so you need to provide a secret SSH key that Airflow will use to download the repository. This can be done safely using a combination of Kubernetes secrets and AWS Secrets Manager. In AWS Secrets Manager, create a new secret and paste your Git private SSH key as its content. Pay attention to the newline at the end of the content: the key might not work without it, and that's a very tricky bug to catch.
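The trailing-newline pitfall and the git sync settings can be sketched in a few lines of Python. This is an illustration under assumptions, not the exact setup used here: the secret name `airflow-ssh-secret`, the `airflow` namespace and the repository URL are hypothetical, and the `dags.gitSync.*` keys and the `gitSshKey` data key follow the official chart's documented layout as I understand it, so check them against your chart version. How the "5 nested folders" limit was expressed is not shown in the text, so it is left out, and the AWS Secrets Manager side is not covered.

```python
import base64
import json


def normalize_private_key(key_text: str) -> str:
    """Return the key text ending in exactly one newline."""
    return key_text.rstrip("\r\n") + "\n"


def build_git_ssh_secret(key_text, name="airflow-ssh-secret", namespace="airflow"):
    """Build a Kubernetes Secret manifest (as a dict) holding the SSH key.

    The name and namespace defaults are hypothetical; 'gitSshKey' is the
    data key the official chart is assumed to look for.
    """
    normalized = normalize_private_key(key_text)
    encoded = base64.b64encode(normalized.encode("utf-8")).decode("ascii")
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        "data": {"gitSshKey": encoded},
    }


def build_git_sync_values(repo, branch, ssh_secret_name="airflow-ssh-secret", sub_path=""):
    """Build the git sync part of a values override for the Helm chart.

    An empty sub_path means "sync from the repository root" instead of
    the chart default of tests/dags.
    """
    return {
        "dags": {
            "gitSync": {
                "enabled": True,
                "repo": repo,
                "branch": branch,
                "subPath": sub_path,
                "sshKeySecret": ssh_secret_name,
            }
        }
    }


if __name__ == "__main__":
    # Placeholder key text; never hard-code a real private key.
    placeholder_key = (
        "-----BEGIN OPENSSH PRIVATE KEY-----\n"
        "<key body>\n"
        "-----END OPENSSH PRIVATE KEY-----"
    )
    print(json.dumps(build_git_ssh_secret(placeholder_key), indent=2))
    # Hypothetical repository URL and branch.
    values = build_git_sync_values("git@github.com:example-org/example-dags.git", "main")
    print(json.dumps(values, indent=2))
```

Since JSON is a subset of YAML, both printed documents should be usable wherever a YAML manifest or values file is expected, but treat that as a convenience of this sketch and not as a recommended workflow.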

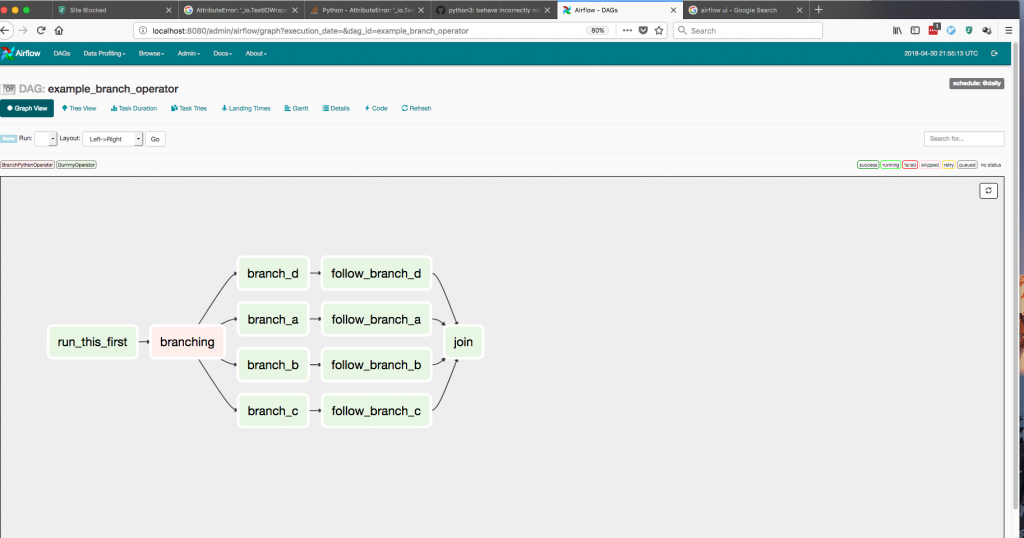

In the previous article, we described the deployment of your own Kubernetes cluster in AWS using the Elastic Kubernetes Service (EKS). After your cluster is up and running, it's time to deploy the first resources to it, in our case Airflow and MLflow.

## Airflow

At Pilotcore, we often use Airflow pipelines in our machine learning projects along with MLflow for model management. Airflow is an open-source tool that allows you to programmatically define and monitor your workflows. Since its initial release in 2015, it has gained enormous popularity, and today it's a go-to tool for many data engineers. In combination with EKS, Airflow on Kubernetes can be a reliable, highly scalable tool to handle all your data. Let's look at some of its options and how it can be used along with MLflow on Kubernetes.

## Helm

Airflow has an official Helm chart that can be used for deployments in Kubernetes. Theoretically speaking, all you need to do is run the following command from your command line: `helm install airflow --namespace airflow apache-airflow/airflow`. Of course, in practice there is a lot of configuration needed. Most things will depend on your particular use case, but here we will take a look at some considerations.

## Git Sync

Airflow's git syncing is a very handy tool to enable GitOps over your DAGs. Simply speaking, Airflow will periodically check the git repository and, if it detects changes, pull them, automatically updating your DAGs without any additional work.
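The "check periodically, pull on change" behaviour described above can be pictured with a small polling loop. This is only a conceptual sketch and not how the git sync sidecar is actually implemented: `get_remote_rev` and `pull` are hypothetical callables standing in for "ask the remote for the branch's latest commit" and "update the local DAG folder", and the 60-second period is an arbitrary choice.

```python
import time


def sync_once(get_remote_rev, local_rev, pull):
    """Pull only when the remote revision differs from the local one.

    Returns the revision the local copy is at afterwards.
    """
    remote_rev = get_remote_rev()
    if remote_rev != local_rev:
        pull(remote_rev)
        return remote_rev
    return local_rev


def run_sync_loop(get_remote_rev, pull, period_seconds=60.0,
                  max_iterations=None, sleep=time.sleep):
    """Poll the remote every period_seconds.

    max_iterations and sleep exist so the loop can be bounded and tested;
    with max_iterations=None the loop runs until the process is stopped.
    """
    local_rev = None  # nothing has been synced yet
    iterations = 0
    while max_iterations is None or iterations < max_iterations:
        local_rev = sync_once(get_remote_rev, local_rev, pull)
        iterations += 1
        sleep(period_seconds)
    return local_rev


if __name__ == "__main__":
    # Simulated remote: the branch moves from commit "a" to commit "b".
    revisions = iter(["a", "a", "b"])
    run_sync_loop(
        get_remote_rev=lambda: next(revisions),
        pull=lambda rev: print(f"pulling {rev}"),
        period_seconds=0.0,
        max_iterations=3,
    )
```

The point of the sketch is the trade-off it makes visible: DAG changes reach Airflow with no deployment step, but only after the next poll, and whatever is on the branch at that moment is what gets run.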
